Method

Our pipeline combines segmentation, diffusion-based inpainting, and generative insertion with explicit motion modeling for temporally coherent video editing.

Pipeline Overview

- Object Segmentation — SAM2 generates per-frame binary masks for the target object via prompt-guided tracking.

- Diffusion Inpainting — Stable Diffusion inpainting with ControlNet conditioning fills masked regions, generating the replacement object in context.

- Motion-Aware Warping — RAFT optical-flow-derived similarity transforms warp the generated object to match inter-frame motion.

- Compositing — Alpha-blended composition produces the final output video.

Mathematical Formulation

Similarity Transform

Each frame's object pose is described by a similarity transform $$T_t(x) = s_t \, R(\theta_t) \, x + b_t$$, where $s_t$ is the scale factor, $R(\theta_t)$ is a 2D rotation matrix, and $b_t$ is the translation vector.

Optical Flow Warping

Given dense optical flow $F_{t \to t+1}: \mathbb{R}^2 \to \mathbb{R}^2$ between consecutive frames, we warp the replacement object mask:

$$\hat{I}_{t+1}(p) = I_t\bigl(p + F_{t \to t+1}(p)\bigr)$$

Warping-Consistency Error

We measure temporal consistency via the warping error across frames:

$$E_{\text{warp}} = \frac{1}{|\Omega|} \sum_{p \in \Omega} \bigl\| I_{t+1}(p) - \hat{I}_{t+1}(p) \bigr\|_2$$

where $\Omega$ is the set of valid (non-occluded) pixels.

Mask Propagation Quality

We evaluate mask quality using Intersection over Union: $$\text{IoU} = \frac{|M_{\text{pred}} \cap M_{\text{gt}}|}{|M_{\text{pred}} \cup M_{\text{gt}}|}$$ and the Dice coefficient: $$\text{Dice} = \frac{2 |M_{\text{pred}} \cap M_{\text{gt}}|}{|M_{\text{pred}}| + |M_{\text{gt}}|}$$

SAM2 vs. YOLO Mask Propagation

SAM2

- Prompt-guided tracking with memory attention

- Higher IoU on deformable objects

- Slower per-frame inference than YOLO

YOLOv8-seg

- Per-frame instance segmentation

- Faster inference than SAM2

- Lower mask quality on non-rigid objects

Related Work

Limitations Identified by Hayk Minasyan

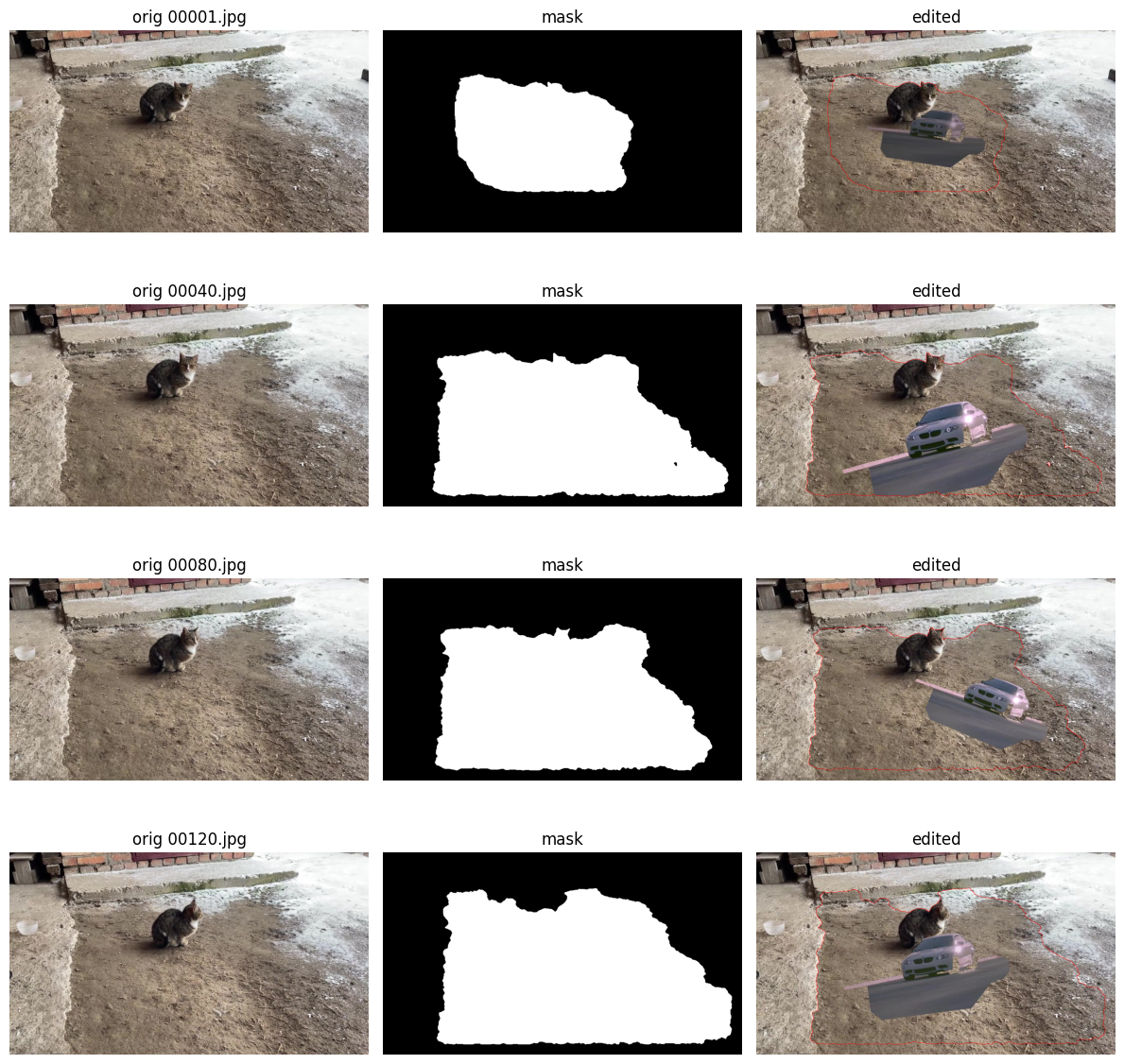

The following video demonstrations, produced by Hayk Minasyan based on the Shin et al. research, expose key failure modes of the per-frame diffusion pipeline when applied to real-world video content. These results directly informed our critical review.

Non-Rigid Motion Failure

The similarity-transform motion model cannot represent articulated or deformable motion, causing severe distortion when applied to non-rigid objects.

Optical Flow Artifacts

RAFT-based warping introduces blurring and stretching under fast or complex motion, with degraded performance on real-content videos compared to synthetic training data.

Supplementary Qualitative Reproduction

As a qualitative supplement to our critical review, we ran an independent notebook experiment reproducing the thesis pipeline. The results below visually confirm the central failure mode discussed throughout the review.

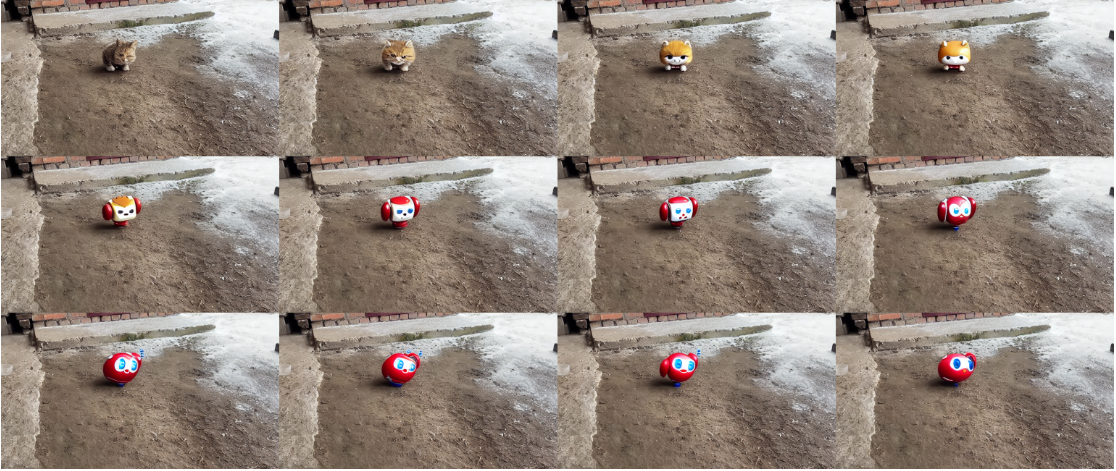

12-Frame Object Replacement Sequence

A small cat in the source clip is progressively replaced by a stylized toy-like character. Coarse spatial localization is largely maintained — the edited object stays in approximately the correct ground-plane region across frames. However, object identity is not temporally stable: the replacement drifts from a cat-like yellow object in earlier frames toward a red, rounded robot-like character in later frames.

This behavior is qualitatively consistent with the review's main critique: auxiliary controls around frame-wise generation can preserve rough spatial placement while still failing to enforce stable cross-frame appearance. The grid supports the argument that coarse motion transfer alone does not guarantee temporal appearance consistency.

Next Steps

- Integrate depth-aware warping for parallax-correct object insertion in 3D scenes.

- Explore diffusion-based video generation models (e.g., SVD) for end-to-end replacement.

- Benchmark on DAVIS and YouTube-VOS datasets for standardized comparison.

- Investigate 3D Gaussian Splatting approaches for improved temporal consistency.